Introduction

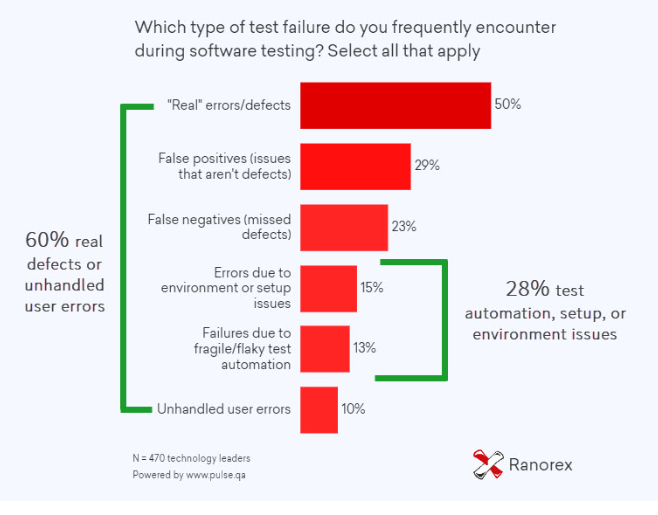

The advantages of automated mobile testing are many and well known. Testing from the very beginning of the Software Development Life Cycle uncovers bugs early, so they can be fixed quickly and at minimal cost. Overall, testing saves the company resources. To get reliable results from automation, however, it is also necessary to understand when and why it fails. As the accompanying graph shows, 28% of test failures occur due to automation, environment, and setup issues.

Not planning ahead

Automating tests without a plan can doom the effort from the beginning. A test plan should lay out the aims of the Quality Assurance team at the outset. For instance, the respective teams must settle the following questions beforehand:

- Which tests should be automated?

- How should the tests be moved into the Continuous Integration (CI) pipeline?

- Which test cases are good candidates for automation? Typical candidates are:

  - Regression test cases or other repetitive test executions

  - Test cases that are prone to human error

  - Application functionality that is likely to change

  - Test case scenarios that are time-consuming

Collect regular feedback and build the CI pipeline up gradually so that it stays well ordered. Starting with simple automation tasks and working up to complex ones is a good idea.
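One way to make the candidate criteria above concrete is to score each test case against them. The following is only a minimal sketch: the `CandidateCase` fields, the one-point-per-criterion scoring, and the 15-minute threshold for "time-consuming" are all assumptions made for illustration, and the sample cases are invented.

```python
from dataclasses import dataclass


@dataclass
class CandidateCase:
    name: str
    is_regression: bool          # re-run on every build
    error_prone_manually: bool   # easy for a human tester to get wrong
    feature_is_volatile: bool    # functionality expected to change
    manual_minutes: int          # time one manual run takes


def automation_score(case: CandidateCase) -> int:
    """Hypothetical scoring: one point per criterion the case meets."""
    return sum([
        case.is_regression,
        case.error_prone_manually,
        case.feature_is_volatile,
        case.manual_minutes >= 15,  # assumed threshold for "time-consuming"
    ])


def rank_candidates(cases):
    """Return cases sorted from strongest to weakest automation candidate."""
    return sorted(cases, key=automation_score, reverse=True)


# Invented sample data, for illustration only.
cases = [
    CandidateCase("login smoke test", True, False, False, 5),
    CandidateCase("checkout with 40 form fields", True, True, False, 30),
    CandidateCase("one-off marketing banner", False, False, False, 2),
]
ranked = rank_candidates(cases)
for case in ranked:
    print(automation_score(case), case.name)
```

A team would tune the criteria and weights to its own plan; the point is that the decision is written down and repeatable rather than made ad hoc.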

Not having enough information

It is especially damaging when decision-makers lack the necessary information about their automation tests or the teams that execute them. Such decision-makers must know their teams because:

- It helps them understand the recommendations and concerns of the automation engineers.

- It helps them gauge the skills of the automation test engineers.

Having unrealistic expectations

Testing can never be exhaustive, and it is not possible to automate every function of the software. One purpose of automation is to free up time for functional testing. When engineers automate tests for things that do not need them, more time goes into updating test scripts and maintaining automation for modules that would have worked fine without it. Experienced judgment, or a detailed plan of which tests are required, can prevent this kind of automation failure.

Not executing parallel, independent tests

Executing multiple test scripts in parallel saves time compared to running them sequentially. Testers often choose to run tests one after another instead of simultaneously, and this decision can slow down the entire process.

Tests can only be run in parallel when they are independent of one another. Dependent tests are a common reason why test automation fails. Independent tests pass or fail on their own merit and have no impact on the results of other tests. Dependent tests, on the other hand, may fail because a previously executed test failed, so failures cascade in a domino effect.
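The idea of independent tests can be illustrated with a small sketch that uses only Python's standard library. The `CartTests` class and the `run_one` helper are hypothetical names chosen for this example; a real mobile suite would typically rely on its test runner's own parallel mode instead of a hand-rolled thread pool.

```python
import unittest
from concurrent.futures import ThreadPoolExecutor


class CartTests(unittest.TestCase):
    def setUp(self):
        # Each test builds its own cart, so no test relies on state
        # left behind by another test.
        self.cart = []

    def test_add_item(self):
        self.cart.append("phone")
        self.assertEqual(self.cart, ["phone"])

    def test_empty_cart_has_no_items(self):
        self.assertEqual(len(self.cart), 0)


def run_one(test_name):
    """Run a single test method in isolation and report whether it passed."""
    suite = unittest.TestSuite([CartTests(test_name)])
    result = unittest.TestResult()
    suite.run(result)
    return test_name, result.wasSuccessful()


test_names = unittest.TestLoader().getTestCaseNames(CartTests)

# Because the tests share no state, they can run in any order or concurrently.
with ThreadPoolExecutor(max_workers=2) as pool:
    outcomes = dict(pool.map(run_one, test_names))

print(outcomes)
```

If `test_empty_cart_has_no_items` instead relied on a cart shared with `test_add_item`, its result would depend on execution order, which is exactly the cascading failure described above.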

Not having test reports

Not having reports for automation tests, or not paying enough attention to them, is a mistake that can lead to automation testing failure. Test reports help engineers analyze the results and investigate each defect in order to resolve it. Some testing strategies fail because the team overlooks its test reports.

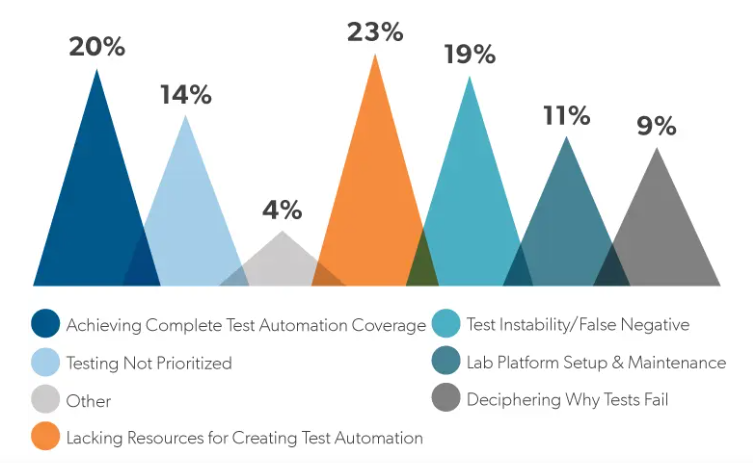

According to research, 9% of development teams found testing difficult simply because the reason behind a failure is hard to decipher. Good reports can solve this issue to a great extent.
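As a rough sketch of what a useful report contains, the snippet below summarizes a list of test outcomes and groups the failures by cause. The `Outcome` record, the `summarize` function, the reason categories, and the sample data are all hypothetical; real projects usually get this from their test framework's reporting output.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Outcome:
    test: str
    passed: bool
    reason: str = ""  # hypothetical failure category, empty when passed


def summarize(outcomes):
    """Build a small report: totals plus failures grouped by reason."""
    failures = [o for o in outcomes if not o.passed]
    return {
        "total": len(outcomes),
        "passed": len(outcomes) - len(failures),
        "failed": len(failures),
        "failures_by_reason": dict(Counter(o.reason for o in failures)),
        "failed_tests": [o.test for o in failures],
    }


# Invented sample data, not real results.
outcomes = [
    Outcome("test_login", True),
    Outcome("test_checkout", False, "environment"),
    Outcome("test_search", False, "assertion"),
    Outcome("test_profile", False, "environment"),
]
report = summarize(outcomes)
print(report)
```

Grouping failures by reason makes it easier to see whether a red build points to a product defect or to an environment or setup problem.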

Not selecting the correct tools

Choosing a tool that fits your automated mobile testing requirements is of utmost importance. Many kinds of tools are available on the market. Consider the requirements, the expectations, the application, and the cost before selecting one. Different automation tools have different specialties, for instance:

- Appium automates native, hybrid, and mobile web applications on iOS, Android, and Windows.

- TestNG is a Java testing framework that provides assertions for verifying results.

- Selenium WebDriver automates web browsers for UI testing.

- REST Assured is a Java library for testing RESTful web services.

Organizations must be aware of these particular strengths when deciding which tool to choose.

Overlooking the presence of bugs

It helps to divide these bugs into three categories.

- Cross-browser compatibility bugs: Applications are tested for cross-browser compatibility, but unless this testing is taken seriously, it can fail. Such oversight can lead to lost revenue and an unpleasant end-user experience.

- Form validation bugs: These are often ignored because they are considered low priority. But poorly validated forms can throw errors or malfunction when invalid characters are entered. Developers should adhere to the following practices when setting up validation logic:

  - Limit the number of characters for fields such as zip code, telephone number, and ID.

  - Define the maximum acceptable length of the alphanumeric passwords users can set. Special characters, if permitted, must be defined as well.

- Usability bugs: The primary motive of testing is to make an application user-friendly. If there are bugs in the end-user interface and its functionality, the whole purpose of the exercise is defeated. Developers must prioritize easy navigation and a readable interface.
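The form validation practices listed above can be sketched as follows. Every limit here (a 5-digit zip code, a 10-digit phone number, a 64-character password cap, and the set of permitted special characters) is an assumed value for illustration; real limits depend on the application and its locale.

```python
import re

# Hypothetical limits; real values depend on the application's requirements.
ZIP_CODE = re.compile(r"[0-9]{5}")
PHONE = re.compile(r"[0-9]{10}")
PASSWORD_MAX_LENGTH = 64
ALLOWED_SPECIALS = "!@#$%^&*"
PASSWORD = re.compile(r"[A-Za-z0-9" + re.escape(ALLOWED_SPECIALS) + r"]+")


def is_valid_zip(value: str) -> bool:
    """Accept exactly five ASCII digits."""
    return ZIP_CODE.fullmatch(value) is not None


def is_valid_phone(value: str) -> bool:
    """Accept exactly ten ASCII digits."""
    return PHONE.fullmatch(value) is not None


def is_valid_password(value: str) -> bool:
    """Enforce the maximum length and the explicitly permitted characters."""
    if len(value) > PASSWORD_MAX_LENGTH:
        return False
    return PASSWORD.fullmatch(value) is not None


print(is_valid_zip("12345"), is_valid_zip("1234a"))
print(is_valid_password("Secret!123"), is_valid_password("bad password"))
```

Automated tests for such rules should cover the boundaries: a value exactly at the limit, one character over it, and an input containing a character that is not permitted.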

Overlooking inconsistencies across devices

Responsive applications are a necessity. If page layouts are inconsistent across devices, the user experience may suffer, leading to the loss of existing and potential customers. Different screen sizes should not stand in the way of a seamless experience. Developers should research the devices their target audience uses so that automated mobile testing covers them.

Not having manual testing

Avoiding manual tests in favor of automated testing alone is not wise. It is important to strike a balance between the two; failing to do so may lead to test automation failure. Automation should free manual testers to focus on the areas that need human intervention, not replace them.

Lack of automated regression testing

Teams must not overlook automated regression testing, especially when new functionality is added to their applications. An intelligent approach to delivering regression insights is a necessity in today's economic climate. Analyzing the effect of changes across new application versions, OS releases, future additions, and locations is also an essential, often missed step. It helps catch degradation in every new build.

Conclusion

There is no debate about the benefits of automated mobile testing: they outweigh the disadvantages. However, QA teams must make sure they avoid the common mistakes in administering tests. As long as planned, informed decisions are made by people aware of the necessary guidelines, automated tests can be of great use to enterprises.